Sometimes brute forcing just works

Marton Trencseni - Thu 06 May 2021 - Machine Learning

Introduction

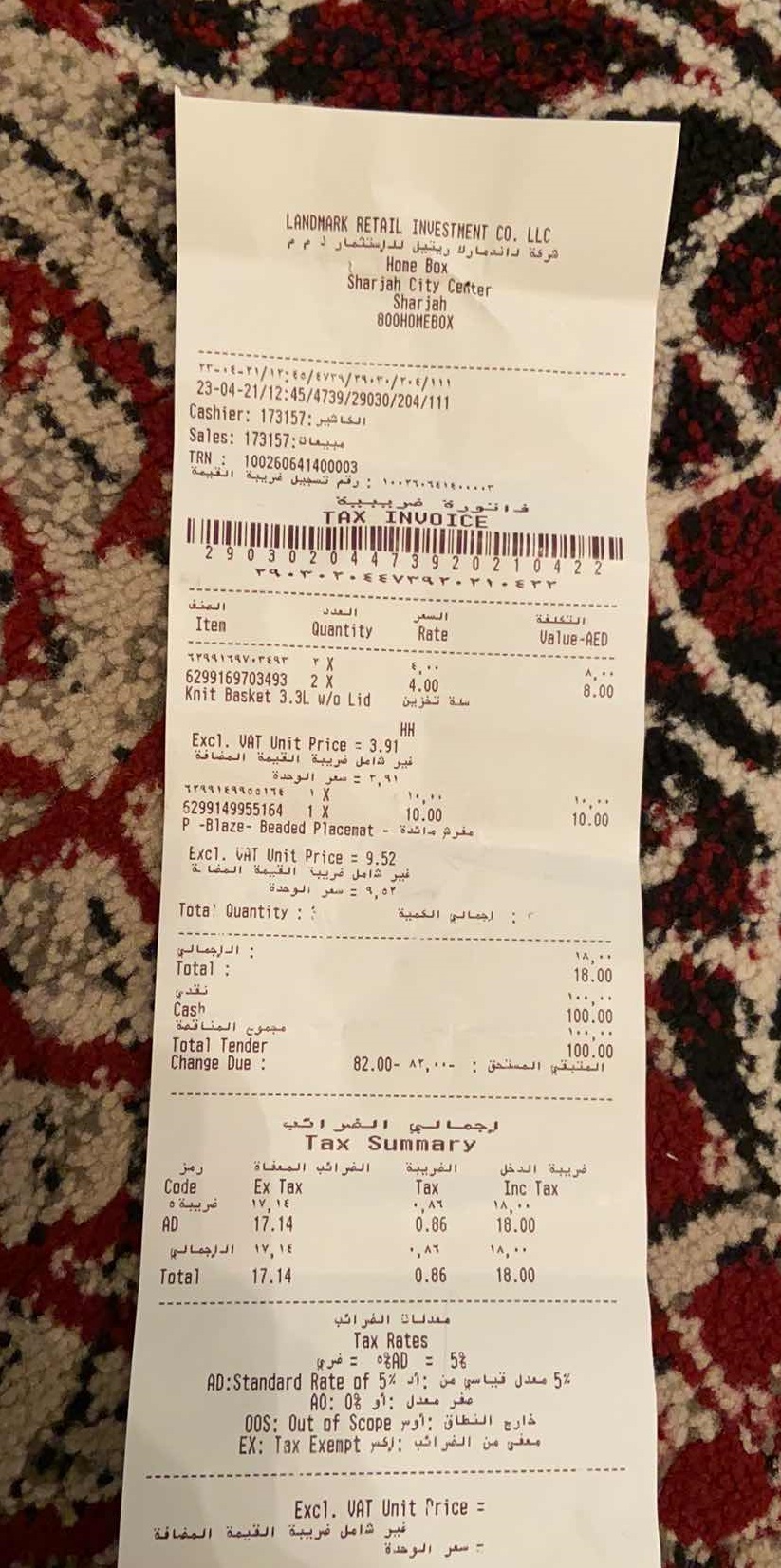

Recently I was working on the problem of "parsing" store receipts. The customer takes a picture of the receipt using their smartphone and submits it, and it is our job to extract certain core fields from the receipt, such as the total amount or the date of the receipt. We had an existing solution which used a state of the art, but not receipt specific OCR tool to convert the image to a chunk of text, and then used various regular expressions to extract these fields --- however the extraction rate and accuracy only 70-80% for each extracted field. I started working on this as a weekend hack to see whether I can improve the accuracy of the existing solution.

I will describe how I cracked extracting the date field, where a surprisingly simple brute force search base approach worked, without any machine learning. It's a good lesson to remember that sometimes relatively simple searching and string manipulation works, and we don't need to go to gradient boosting or neural networks.

It's fair to ask: isn't this a solved problem? Aren't there out of the box solutions for receipt parsing? I thought the same thing, so as a zeroth step we looked at 3-4 different SaaS offerings, but they had very poor recognition rates (in the 50-60% range), possibly because of our arabic text, and because we don't currently enforce quality standards on the uploaded images (eg. many are blurry, etc).

Brute force search

Since I was hacking, I decided to first stick with the existing OCR approach, before moving on to more fancy deep learning on the image itself. First I did "data exploration", which in this case means looking at a lot of the receipt pictures and the corresponding OCR text to get a sense of the problem. I was able to do this, because the existing solution has been in production for a while, so we have more than a million labeled samples (there is a team of humans correcting and labeling low confidence results).

An additional difficulty here was that most of the invoices also had arabic text on them, which tripped off the OCR software, so sometimes it switched from left-to-right to right-to-left word ordering in the text output. Also, the OCR software is not specific to invoices, so sometimes it got confused and thought the invoice is like a column of text in a newspaper, and parsed out the line items ("t-shirt") on the left of the invoice as a block of text, and then all the amounts on the right. This means that the resulting OCR string was very "dirty", very hard to "read" and see patterns in it.

LANDMARK, RETAIL INVESTMENT CO. LLC مركة داند مارلا وپتيل للاستثمار ذ

م Hone Box Sharjah City Center Sharjah 800HOMEBOX الكاشي : 173157

:Cashier بیان :173157 :Sales ۲۲۰۱۶-۲۱/۱۴ ۹۶/۶۷۴۹/۳۰۹۰۳۰/۲۰۶/۱۱۱

23-04-21/12:45/4739/29030/204/111 : : : TRN: 100260641400003 ۱۰۰۳۶۰۶۱۹۰۰۰۰۳

: وتم تسجيل ضريبة القيمة هم ببیة فاتورة TAX INVOICE 29 0 3 0 2 0 4 4 7 3 9 2 0 2 1 0 4 2 2

۹۰۳۰۳۰ ۷۳ ۲۱۰۳۲ الصنف العدل السعر Iten Quantity Rate Value-AED وو؟ ۳۹۹۱۹۹۷۰۳۹۹۳ ۴X

6299169703493 2 X Knit Basket 3.3L w/o Lid , 4.00 8.00 سنة تخز بین

HH Excl. VAT Unit Price = 3.91 غير شامل ضريبة القيمة المضافة ۳,۹۱ = سعر الوحدة مفرد مائدة -

P -Blaza - Beaded Placetat ۶۴۹۹۱۹۹۹:۱۶ ۱۷ ۱۰,۰۰ 5299149955164 1X 10.00 P .

Excl. VAT Unit Price = 9.52 10.00 غير شامل ضريبة القيمة المضاة ۹,۵۲ = سعر الوحدة إجمالي الكمية

Total Quantity :: : الاجمالي Total : 18.00 نقدی Cash مجمل المناقصة 100.00 و ۱۰۰

Total Tender Change Due : 100.00 المتبقي المستحق : ۸۳,۰۰۰ -82.00 اجمالي الفواتي

Tax Summary رمز الضرائب المعفاة الضريبة ضريبة الدخل Code غربیبة ه

Ex Tax ۱۷,۱ 17.14 Tax ۰,۸۹ 0.86 ,۸۶ 0.86 Inc Tax ۱۸,۰۰ 18.00 AD ۱۴ ,۱۷ الإجمالي

Total 17.14 18.00 معدلات الضرائب Tax Rates = %: 5% 5: % 5 % :5%AD = ضري 5 % معدل تباسي من أن %5 AD :

Standard Rate of عفري معدل :أو 04_ :40 خارج النطاق : أو 00s :

Out of Scope معني من الضرائب : أكسر EX : Sax Exempt Excl.

VAT Unit Price : غير شامل فر بيبة القيمة المضافة سعر الوحدة

Looking at the invoices, I noticed:

- Almost every one had the date in a different format, from

2020-12-24to12-24-2020to2020 Dec'24toDecember 24, 2020. - Because the text was the result of OCR, and the pictures were not clear, little characters like

,.'were not reliably parsed. - However, putting aside the dirtyness of the little characters, once I found the date on the invoice image, I was usually able to find it in the OCR text.

- A big "insight" was that the date of the receipt was always very close to the date of submission (=the date when the user took the picture and uploaded it), and the date of submission is known at parse time.

So I tried a simple thing. I made a list of 10-20 Python format strings for datetime.strftime(), like %Y-%m-%d, and tried the following logic:

def extract_date(text):

formats = ['%Y-%m-%d', ...]

previous_days = self.get_previous_days(submission_day, num_days)

# previous_days is ordered to start at submission_day, and go back in time

for dt in previous_days:

for fmt in formats:

ds = dt.strftime(fmt)

if ds in text:

return dt # found it!

return None

To my big surprise, this worked very well. For the cases where it returned something (not None), it correctly found the date at 95%+ accuracy!

So I went further down this path, and started adding more and more date formats. I quickly realized that instead of manually generating the date formats, I should have the computer do that, too. So I wrote a simple loop to generate lots of possible date formats, with different orderings, dividers and y/m/d formats:

year_formats = ['%Y', '%y']

month_formats = ['%m', '%b', '%B']

day_formats = ['%d']

dividers = ['-', '/', '\\', ...]

orderings = ['Y-M-D', 'D-M-Y', 'M-D-Y']

# generate all possible format strings and test

formats = []

for fmt, divider, year_format, month_format, day_format in product(orderings, dividers, year_formats, month_formats, day_formats):

fmt = fmt.replace('-', divider)

fmt = fmt.replace('Y', year_format)

fmt = fmt.replace('M', month_format)

fmt = fmt.replace('D', day_format)

formats.append(fmt)

This approach continued to work well. Eventually I was at ~80% recognition rate, still at ~95% accuracy. At this point I was finding receipts which had formats which I couldn't generate with datetime.strftime(), so I came up with a minimal wrapper around it, which let me add my own formattings. And finally, for the final few cases I found, I manually extended the generated date format list. Overall, in the end I had a list of 100s of date formats to try.

Finally, add to this some lower/uppercasing, logic to handle cases when multiple candidates are found, and some logic to handle cases when spaces and small characters are lost in the OCR process, I arrived at a very competetive solution.

Conclusion

This brute force, search based method makes a prediction for ~97% of cases, and gets ~95% accuracy, outperforming our current solution by a very wide margin. Because of the repeated string searches (Python substring in string in a loop) it's a bit slow, but it's not worth it to optimize, since it can still process 1000s of requests per second, but we only need to process around 1-10 per second — so optimizing the string searches or rewriting in C++ would be premature optimization.