Multi-Paxos with Disk Persistence

Marton Trencseni - Sun 22 February 2026 - Distributed

Introduction

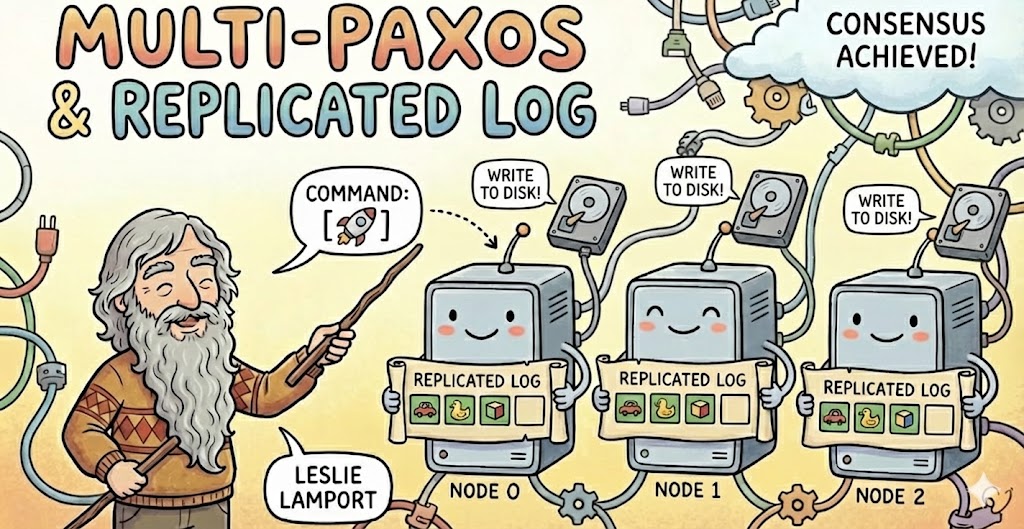

In previous Bytepawn articles, we built a compact implementation of Paxos and then extended it into a simple Multi-Paxos replicated log. The first version demonstrated single-decree consensus: how a cluster of nodes can agree on one value despite crashes, message reordering and delays. The second version ran Paxos repeatedly, slot by slot, to build a replicated log of commands that drove a toy in-memory database. Together, those posts showed how consensus evolves into a replicated state machine.

However, both implementations shared a critical limitation: all state lived in memory. If a node restarted, it forgot its promises, its accepted proposals, and its learned commands. As long as processes stayed alive, everything behaved correctly. But Paxos is explicitly designed to tolerate crashes and restarts. If restarting a node destroys its state, we are no longer faithfully implementing Paxos — we are running something that only resembles it during uptime. This article closes that gap by adding minimal, explicit persistence while keeping the implementation small and pedagogical.

The code is available on GitHub.

Persisting to Disk Is Essential in Paxos

Paxos’ safety guarantee depends on acceptors remembering what they have promised and accepted. The central invariant of the algorithm is that once a value has been chosen — meaning accepted by a majority — no different value can ever be chosen for that same round. This guarantee relies on acceptors refusing to violate earlier commitments. If they forget those commitments after a crash, the safety guarantee collapses.

Consider what happens if an acceptor crashes and restarts without remembering its highest promised proposal number. A new proposer might convince it to accept a proposal that contradicts a value previously accepted by a majority. In effect, the restarted node behaves as if history never happened. That behavior directly violates Paxos’ assumptions. Therefore, persistence is not an optimization or operational improvement; it is part of the correctness model. Acceptors must preserve their per-round state across crashes, or the algorithm’s guarantees do not hold.
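
To make the failure mode concrete, here is a minimal single-round sketch. The `ToyAcceptor` class, its `disk` dictionary (standing in for a file) and the `crash_and_restart` helper are hypothetical names used only for illustration; they are not the article's code. The sketch compares a memory-only acceptor with a persistent one when a stale proposal arrives after a restart.

```python
class ToyAcceptor:
    """Hypothetical single-round acceptor; `disk` is a dict standing in for a file."""

    def __init__(self, disk=None):
        self.disk = disk  # None means "memory only"
        saved = disk if disk is not None else {}
        self.promised_n = saved.get("promised_n")
        self.accepted_n = saved.get("accepted_n")
        self.accepted_value = saved.get("accepted_value")

    def _persist(self):
        if self.disk is not None:
            self.disk.update(
                promised_n=self.promised_n,
                accepted_n=self.accepted_n,
                accepted_value=self.accepted_value,
            )

    def on_prepare(self, n):
        if self.promised_n is None or n > self.promised_n:
            self.promised_n = n
            self._persist()
            return True
        return False

    def on_propose(self, n, value):
        if self.promised_n is None or n >= self.promised_n:
            self.promised_n = self.accepted_n = n
            self.accepted_value = value
            self._persist()
            return True
        return False


def crash_and_restart(acceptor):
    # A restart builds a fresh object; only what is on "disk" survives.
    return ToyAcceptor(disk=acceptor.disk)


# Memory only: the promise for n=10 is lost, so a stale n=5 proposal gets in.
volatile = ToyAcceptor()
volatile.on_prepare(10)
volatile = crash_and_restart(volatile)
forgetful_accepts_stale = volatile.on_propose(5, "stale")

# Persistent: the promise survives the restart and the stale proposal is rejected.
durable = ToyAcceptor(disk={})
durable.on_prepare(10)
durable = crash_and_restart(durable)
durable_accepts_stale = durable.on_propose(5, "stale")

print(forgetful_accepts_stale, durable_accepts_stale)  # True False
```

The memory-only acceptor accepts a proposal it had already promised to reject, which is exactly the broken commitment described above.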

Learners, while not responsible for safety in the same way as acceptors, also benefit from durability. If learners persist the chosen values of each round, the replicated log becomes the authoritative history of the system. On restart, a node can reconstruct its local database by replaying that history.

Design Decision: Replay the Log Instead of Snapshots

When introducing durability into a replicated log, there are two common design choices. The first is snapshotting: periodically persist the entire database state to disk. Upon restart, the node loads the snapshot and resumes operation. This approach is efficient and common in production systems, but it introduces additional complexity around snapshot timing, consistency, and truncation of old log entries.

The second approach is log replay, which we choose here for simplicity. Instead of persisting the database directly, we persist each chosen command as part of the log. On restart, the node reloads the commands in order and replays them to reconstruct its state. This design keeps the mental model simple: the log is the ground truth, and the database is a derived artifact. It also aligns perfectly with the replicated state machine model introduced earlier. While replay may become slow if the log grows very large, it keeps the implementation minimal and transparent — ideal for this educational setting.
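
The replay idea can be sketched in a few lines. The `replay` function and the sample commands below are illustrative assumptions, not the article's code; the sketch relies only on the fact that commands are Python statements applied with exec(). Replaying the same log twice yields the same state, provided the commands themselves are deterministic.

```python
def replay(commands):
    """Rebuild a database namespace by executing each logged command in order."""
    db = {}
    for command in commands:
        # exec() runs the command string with `db` as its global namespace.
        exec(command, db)
    # exec() adds a __builtins__ entry to the namespace; drop it for comparison.
    db.pop("__builtins__", None)
    return db


log = ["li = []", "li.append(1)", "li.append(2)", "total = sum(li)"]

first = replay(log)
second = replay(log)  # simulating a restart: same log, fresh namespace
print(first)  # {'li': [1, 2], 'total': 3}
```

Running arbitrary strings through exec() is acceptable only in a toy setting like this one, where the commands are trusted.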

Code Changes

Changes in PaxosAcceptor. The most important persistence changes occur in the PaxosAcceptor. For each round, the acceptor now writes its state to disk in a file such as acceptor.<node_id>/<round_id>.json. That file contains the round’s promised_n, accepted_n, and accepted_value. Every time the acceptor updates its state while handling a prepare or propose message, it writes the updated JSON to disk using an atomic rename pattern before the reply is sent. If the process crashes mid-write, the file contains either the old valid state or the new valid state, never a partially written one.

An example JSON file for the Acceptor:

{"promised_n": 256, "accepted_n": 256, "accepted_value": "li = []"}

On startup, the acceptor scans its directory and reconstructs the in-memory rounds dictionary from the saved JSON files. In the context of Multi-Paxos, it is only necessary to reconstruct the Acceptor state of the last, highest-numbered round; for simplicity, all available rounds are loaded from disk. Note that Acceptor states on disk may have gaps: our implementation lets lagging nodes catch up by learning directly from more up-to-date Learners, in which case their Acceptors never store any state for the caught-up rounds.

    class PaxosAcceptor:
        ...

        def _persist_round(self, round_id, st):
            atomic_write_json(self._round_path(round_id), {
                "promised_n": st.promised_n,
                "accepted_n": st.accepted_n,
                "accepted_value": st.accepted_value,
            })

        def load_persisted(self):
            # Load acceptor.<id>/*.json into self.rounds
            if not self.dir.exists():
                return
            for p in self.dir.glob("*.json"):
                try:
                    rid = int(p.stem)
                    data = json.loads(p.read_text(encoding="utf-8"))
                    self.rounds[rid] = SimpleNamespace(
                        promised_n=data.get("promised_n"),
                        accepted_n=data.get("accepted_n"),
                        accepted_value=data.get("accepted_value"),
                    )
                except Exception:
                    pass

        def on_prepare(self, round_id, proposal_id):
            with self._lock:
                st = self._get_round_state(round_id)
                if st.promised_n is None or proposal_id > st.promised_n:
                    st.promised_n = proposal_id
                    self._persist_round(round_id, st)  # persist to disk before replying
                    success = True
                else:
                    success = False
                return success, st

        def on_propose(self, round_id, proposal_id, value):
            with self._lock:
                st = self._get_round_state(round_id)
                if st.promised_n is None or proposal_id >= st.promised_n:
                    st.promised_n = proposal_id
                    st.accepted_n = proposal_id
                    st.accepted_value = value
                    self._persist_round(round_id, st)  # persist to disk before replying
                    success = True
                else:
                    success = False
                return success, st

Changes in PaxosLearner. The learner’s persistence mirrors that of the acceptor but serves a different purpose. For each round, the learner stores the chosen_value in learner.<node_id>/<round_id>.json. When a new value is learned, it is first written to disk and then applied to the local database via exec(). Persisting before execution ensures that a crash after learning does not erase the command from history.

On startup, the learner loads all stored round files, sorts them by round number, and replays each command in order to rebuild the database from scratch. The database itself is not persisted; it is reconstructed deterministically from the log. This makes the implementation conceptually clean: the database is simply the cumulative result of executing the log. The learner also checks that there are no gaps in the stored rounds before replaying, ensuring that the local history forms a valid prefix.
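
The learner behaviour described above might look roughly like the following sketch. `ToyLearner` is a hypothetical class written for this illustration, not the article's PaxosLearner; it assumes round IDs start at 0 and, for brevity, uses a plain write instead of an atomic one. It shows the two ordering details that matter: persist before executing, and sort round IDs numerically before replaying.

```python
import json
import tempfile
from pathlib import Path


class ToyLearner:
    """Hypothetical learner: one JSON file per round, database rebuilt by replay."""

    def __init__(self, directory):
        self.dir = Path(directory)
        self.dir.mkdir(parents=True, exist_ok=True)
        self.chosen = {}  # round_id -> command string
        self.db = {}      # derived state, never written to disk

    def learn(self, round_id, command):
        # Persist first, then execute, so a crash in between cannot lose the command.
        path = self.dir / f"{round_id}.json"
        path.write_text(json.dumps({"chosen_value": command}), encoding="utf-8")
        self.chosen[round_id] = command
        exec(command, self.db)

    def load_persisted(self):
        for p in self.dir.glob("*.json"):
            data = json.loads(p.read_text(encoding="utf-8"))
            self.chosen[int(p.stem)] = data["chosen_value"]
        # Sort numerically: as strings, "10" would sort before "2".
        round_ids = sorted(self.chosen)
        if round_ids != list(range(len(round_ids))):
            raise RuntimeError(f"gap in learned rounds: {round_ids}")
        for rid in round_ids:
            exec(self.chosen[rid], self.db)


with tempfile.TemporaryDirectory() as tmp:
    before = ToyLearner(tmp)
    before.learn(0, "li = []")
    for i in range(1, 12):
        before.learn(i, f"li.append({i})")

    after = ToyLearner(tmp)  # simulated restart: new object, same directory
    after.load_persisted()
    recovered = after.db["li"]

print(recovered)  # [1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11]
```

With twelve rounds, a lexicographic sort of the file names would replay round 10 before round 2, so converting the file stem to an integer before sorting is what keeps the rebuilt list in order.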

Helper Functions. A few small helper functions make persistence reliable. An atomic JSON writer keeps each state file intact even if the process crashes mid-write: it writes to a temporary file and then renames it into place, relying on the file system's atomic rename. A reader therefore never observes a torn or partially written file.

    def atomic_write_json(path, obj):
        path.parent.mkdir(parents=True, exist_ok=True)
        tmp = path.with_suffix(path.suffix + ".tmp")
        tmp.write_text(json.dumps(obj, sort_keys=True), encoding="utf-8")
        os.replace(tmp, path)  # atomic on POSIX
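
The rename makes the update atomic with respect to process crashes, but it does not by itself force the data onto the storage device, so a power loss or kernel crash shortly after the write could still lose it. One possible hardening, which is an assumption of this sketch and not part of the article's code, is to fsync the temporary file before the rename and the parent directory after it. The name `durable_write_json` is hypothetical, and how strong the resulting guarantee is depends on the file system and hardware.

```python
import json
import os
import tempfile
from pathlib import Path


def durable_write_json(path, obj):
    """Hypothetical variant of the atomic writer that also fsyncs."""
    path = Path(path)
    path.parent.mkdir(parents=True, exist_ok=True)
    tmp = path.with_suffix(path.suffix + ".tmp")
    with open(tmp, "w", encoding="utf-8") as f:
        f.write(json.dumps(obj, sort_keys=True))
        f.flush()             # push Python's buffer to the OS
        os.fsync(f.fileno())  # ask the OS to push the file contents to the device
    os.replace(tmp, path)     # atomically swap the new file into place
    if os.name == "posix":
        # Also sync the directory so the rename itself is durable (POSIX only;
        # opening a directory this way is not supported on Windows).
        dir_fd = os.open(path.parent, os.O_RDONLY)
        try:
            os.fsync(dir_fd)
        finally:
            os.close(dir_fd)


with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / "acceptor.0" / "7.json"
    state = {"promised_n": 256, "accepted_n": 256, "accepted_value": "li = []"}
    durable_write_json(target, state)
    read_back = json.loads(target.read_text(encoding="utf-8"))
    leftovers = [p.name for p in target.parent.iterdir()]

print(read_back, leftovers)
```

The extra fsync calls cost latency on every prepare and propose, which is the usual trade-off between durability and throughput in this kind of design.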

We also add a “no holes” verification helper that checks whether the loaded round IDs form a contiguous sequence starting at 0. This guard detects accidentally deleted or unreadable files before any replay happens. While simple, these helpers transform the system from a memory-only demo into something that respects Paxos’ durability assumptions:

    def assert_contiguity(name, round_ids):
        rids = sorted(set(round_ids))
        if not rids:
            return
        expected = list(range(rids[-1] + 1))
        if rids != expected:
            missing = sorted(set(expected) - set(rids))
            extra = sorted(set(rids) - set(expected))
            raise RuntimeError(
                f"{name}: error reading back round states, ids not contiguous\n"
                f"expected={expected} actual={rids} missing={missing} extra={extra}"
            )

Interesting Execution Traces with Restarts

With persistence in place, several interesting crash scenarios behave correctly. Suppose a node crashes immediately after promising a proposal but before responding to the proposer. Upon restart, it reloads its promise from disk and continues to reject lower-numbered proposals. Safety is preserved even though the crash occurred mid-protocol.

Consider another case where a learner crashes after learning a value but before replying to a client. On restart, it reloads the learned value from disk, replays the command, and resumes with a consistent database. It does not need to rely on peers to reconstruct its state; recovery is local and deterministic.

Finally, imagine a full cluster shutdown. All nodes stop simultaneously. After restart, each node reloads its acceptor and learner state and replays its log. The entire replicated database reappears exactly as before shutdown. Without persistence, this scenario would erase all progress. With persistence, the system behaves like a durable distributed database.

Conclusion

Adding persistence to Multi-Paxos does not change the algorithm’s logic. The prepare and propose phases are unchanged, and the replicated log remains the driving mechanism of state machine replication. What changes is the durability of memory. Acceptors now truly remember their promises, and learners preserve the history that defines the database.

This step bridges the gap between a teaching implementation and a correct Paxos system. The code remains compact, but it now satisfies one of Paxos’ core assumptions: that certain pieces of state survive crashes. From here, further improvements such as snapshots, log compaction, and leader optimization can be layered on top. But the essential transition has already occurred: the replicated log is no longer ephemeral. It survives restarts, just as Paxos intended.

In the next entry in the series on Paxos, I will merge Paxos and PaxosLease to get a Paxos implementation with a leader (master).