Exploring Lamport's Bakery algorithm in Python

Marton Trencseni - Fri 11 July 2025 • Tagged with lamport, bakery, python, flask, distributed

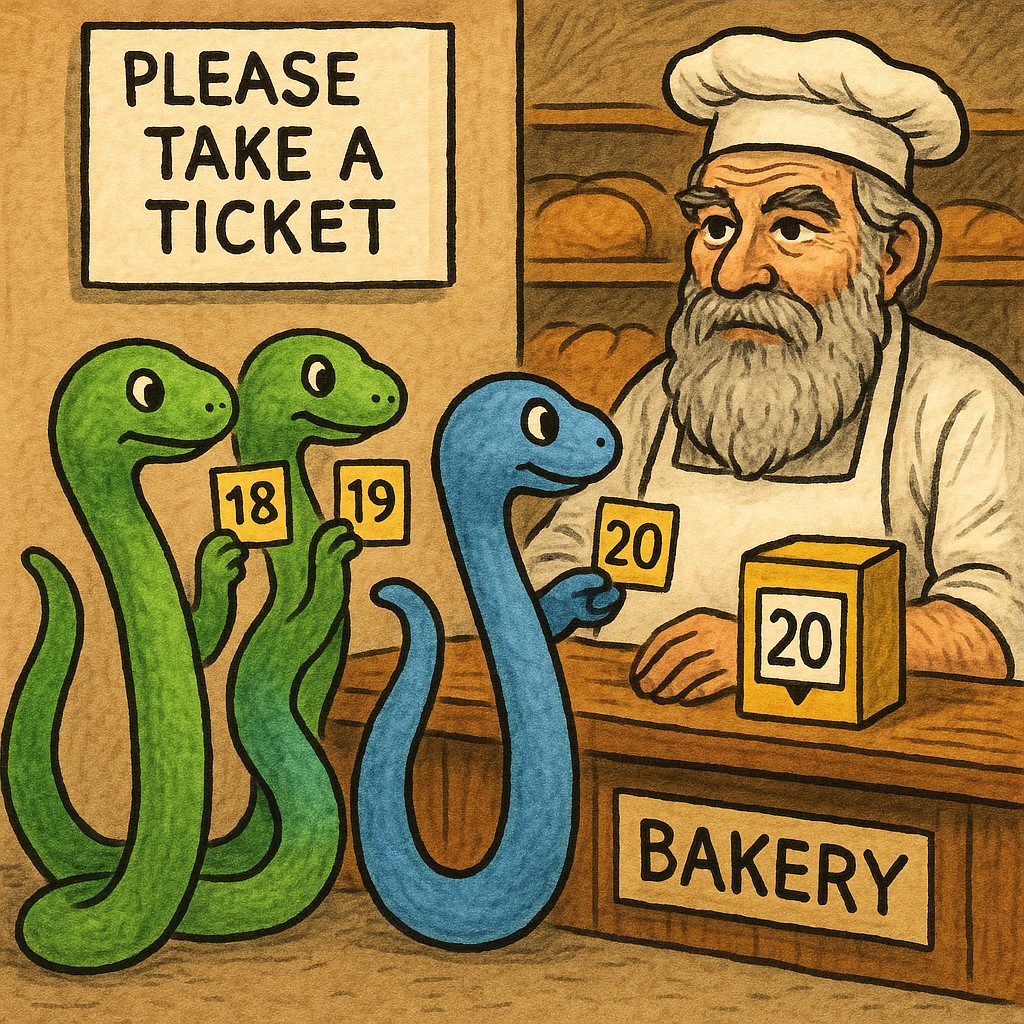

This post shows how to implement Lamport’s Bakery algorithm with Python Flask HTTP servers. The Python code is short and readable, which helps newcomers grasp the algorithm’s logic without the syntactic overhead of C++. The asynchronous HTTP flow also produces plenty of overlap between workers, so the need for mutual exclusion is obvious. Exposing the algorithm’s variables through REST endpoints lets you observe ticket picking and critical-section entry in real time, which may make the flow of the algorithm easier to follow.
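Before moving to HTTP, it may help to see the core logic in one place. The following is a minimal in-process sketch that uses threads instead of Flask servers, so it is not the implementation this post builds. The names `choosing`, `ticket`, `lock`, `unlock`, `worker` and `run`, as well as the values of `N` and `ROUNDS`, are assumptions made for illustration. It also assumes CPython, where each individual list read or write behaves as a single indivisible step.

```python
import threading
import time

N = 3         # number of workers (assumed for this sketch)
ROUNDS = 20   # critical-section entries per worker (assumed)

choosing = [False] * N   # choosing[i] is True while worker i picks a ticket
ticket = [0] * N         # ticket[i] == 0 means worker i is not waiting
counter = 0              # shared value protected by the bakery lock


def lock(i):
    # Doorway: take a ticket one larger than any ticket currently held.
    choosing[i] = True
    ticket[i] = max(ticket) + 1
    choosing[i] = False
    for j in range(N):
        if j == i:
            continue
        # Wait until worker j has finished picking its ticket.
        while choosing[j]:
            time.sleep(0)  # yield so other threads can make progress
        # Wait while j holds a smaller ticket; ties are broken by worker id.
        while (tj := ticket[j]) != 0 and (tj, j) < (ticket[i], i):
            time.sleep(0)


def unlock(i):
    # Giving up the ticket lets waiting workers proceed.
    ticket[i] = 0


def worker(i):
    global counter
    for _ in range(ROUNDS):
        lock(i)
        # Critical section: a deliberately non-atomic read-modify-write.
        current = counter
        time.sleep(0)
        counter = current + 1
        unlock(i)


def run():
    # Start all workers, wait for them, and return the final counter.
    global counter
    counter = 0
    threads = [threading.Thread(target=worker, args=(i,)) for i in range(N)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter


if __name__ == "__main__":
    print(run())
```

If mutual exclusion holds, no increment is lost and `run()` returns `N * ROUNDS`. Removing the calls to `lock` and `unlock` in `worker` is a quick way to see updates go missing.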