Paper: The Unreasonable Effectiveness of Monte Carlo Simulations in A/B Testing

Marton Trencseni - Thu 05 December 2024 • Tagged with ab-testing

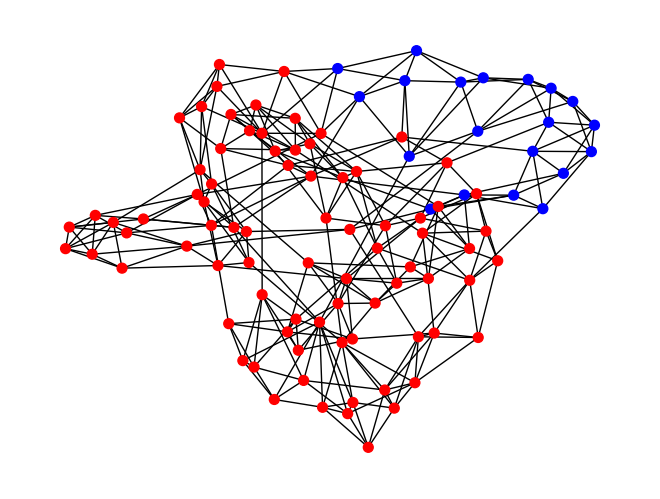

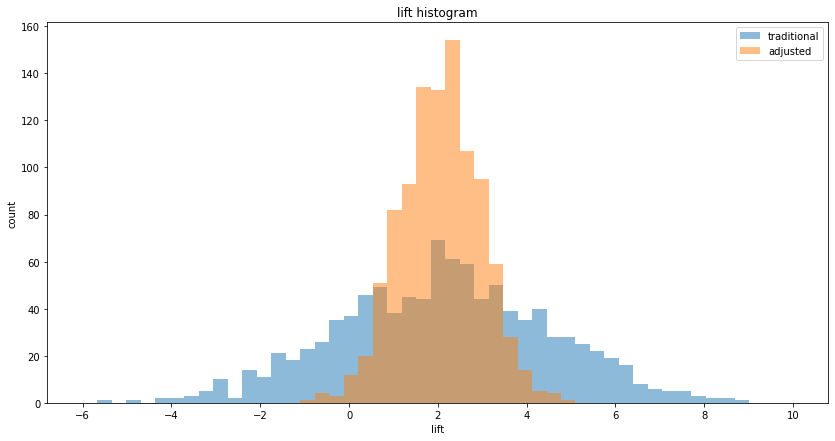

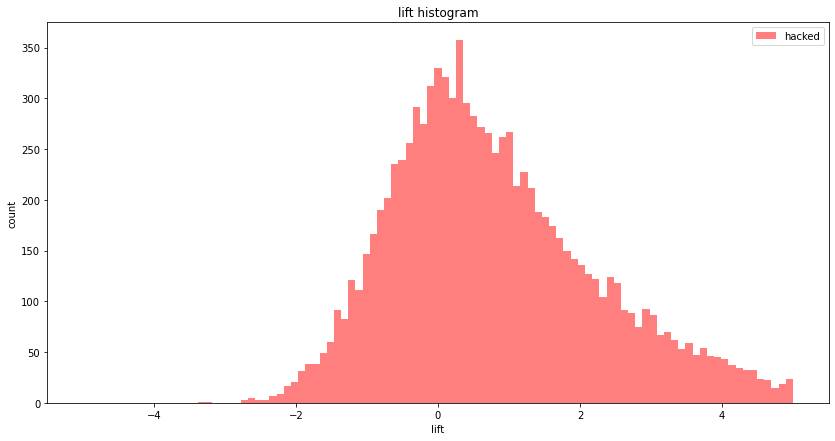

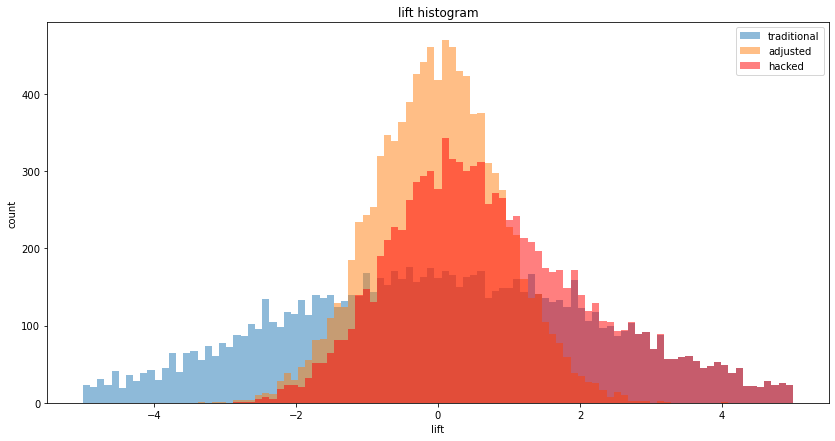

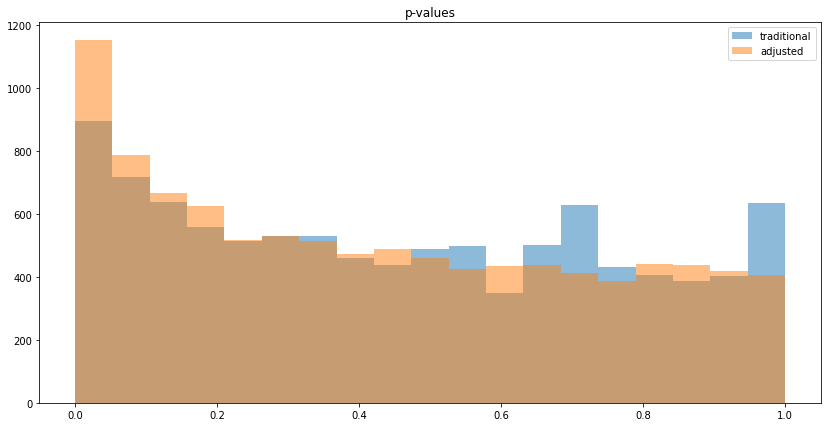

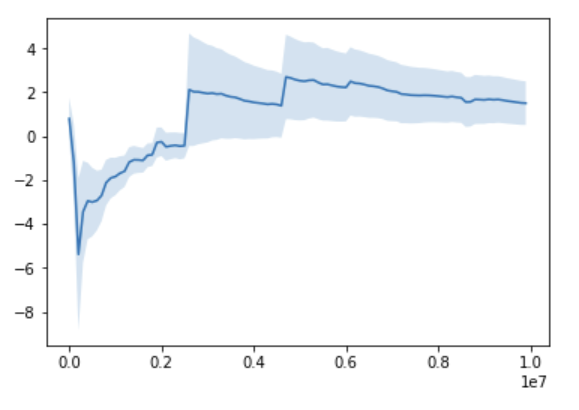

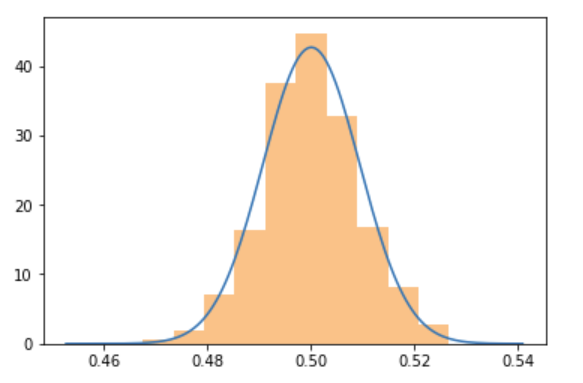

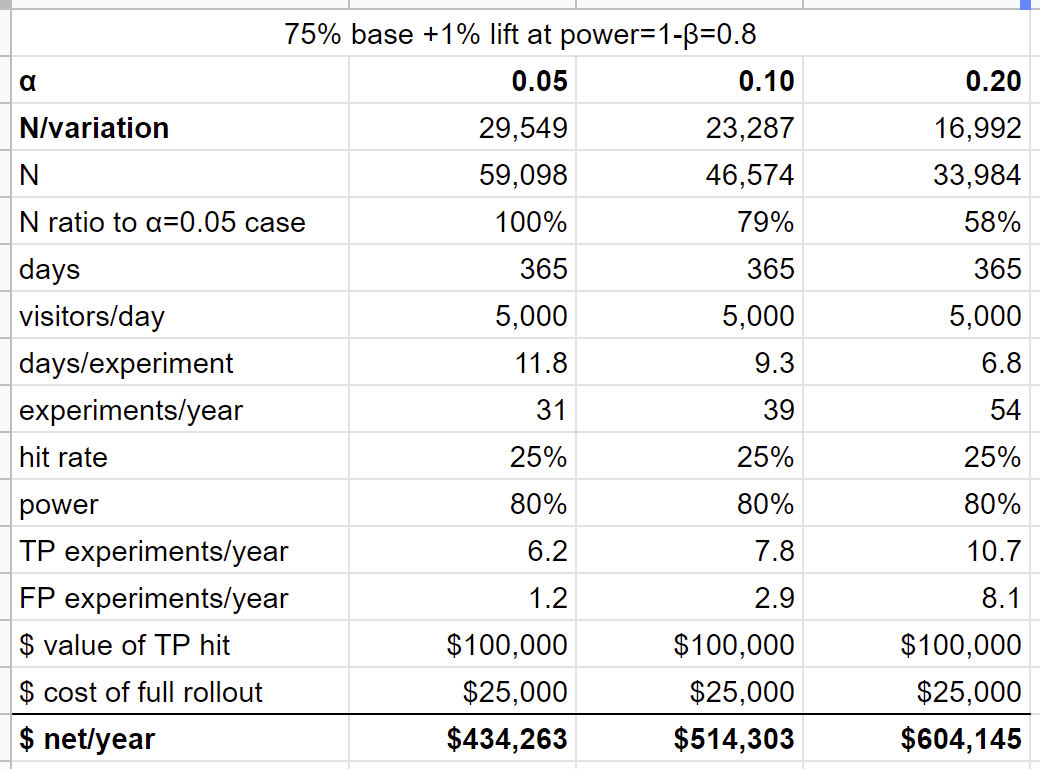

I run Monte Carlo simulations of content production over random Watts-Strogatz graphs to demonstrate several effects relevant to modeling and understanding Randomized Controlled Trials on social networks: the network effect, spillover effect, experiment dampening effect, intrinsic dampening effect, clustering effect, degree distribution effect, and experiment size effect. I also define some simple metrics to measure their strength. When running experiments, these potentially unexpected effects must be understood and controlled for in some manner, such as by modeling the underlying graph structure to establish a baseline.
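
To make the setup concrete, here is a minimal sketch of this kind of simulation in pure Python. It is not the model from the paper: the content production rule (each user's observed output is their own output plus `alpha` times the average output of their neighbors), the parameter names (`lift`, `alpha`, `treated_fraction`), and the default values are all assumptions chosen for illustration. The sketch builds a Watts-Strogatz graph, randomly assigns users to treatment, and averages the measured treatment-minus-control difference over many runs.

```python
import random
from statistics import fmean


def watts_strogatz(n, k, p, rng):
    """Adjacency sets for a Watts-Strogatz graph: a ring lattice where each
    node links to its k nearest neighbors, each edge rewired with probability p."""
    adj = {i: set() for i in range(n)}
    half = k // 2
    for i in range(n):
        for j in range(1, half + 1):
            adj[i].add((i + j) % n)
            adj[(i + j) % n].add(i)
    for j in range(1, half + 1):
        for i in range(n):
            old = (i + j) % n
            if rng.random() >= p or old not in adj[i]:
                continue
            # Rewire (i, old) to (i, new), avoiding self-loops and duplicates.
            candidates = [v for v in range(n) if v != i and v not in adj[i]]
            if not candidates:
                continue
            new = rng.choice(candidates)
            adj[i].remove(old)
            adj[old].remove(i)
            adj[i].add(new)
            adj[new].add(i)
    return adj


def simulate_once(n, k, p, treated_fraction, lift, alpha, rng):
    """One simulated experiment; returns mean(treated) - mean(control)."""
    adj = watts_strogatz(n, k, p, rng)
    treated = set(rng.sample(range(n), int(n * treated_fraction)))
    # Assumed model: baseline output 1.0, additive lift if treated, small noise.
    intrinsic = [
        1.0 + (lift if i in treated else 0.0) + rng.gauss(0.0, 0.1)
        for i in range(n)
    ]
    # Assumed spillover: neighbors' output raises a user's observed output.
    observed = [
        intrinsic[i]
        + (alpha * fmean(intrinsic[j] for j in adj[i]) if adj[i] else 0.0)
        for i in range(n)
    ]
    mean_treated = fmean(observed[i] for i in treated)
    mean_control = fmean(observed[i] for i in range(n) if i not in treated)
    return mean_treated - mean_control


def run_monte_carlo(runs=200, n=200, k=6, p=0.1, treated_fraction=0.5,
                    lift=1.0, alpha=0.5, seed=0):
    """Average measured lift over many random graphs and assignments."""
    rng = random.Random(seed)
    return fmean(
        simulate_once(n, k, p, treated_fraction, lift, alpha, rng)
        for _ in range(runs)
    )


if __name__ == "__main__":
    measured = run_monte_carlo()
    full_rollout = 1.0 * (1 + 0.5)  # lift * (1 + alpha) if everyone were treated
    print(f"measured lift: {measured:.3f}, full-rollout lift: {full_rollout:.3f}")
    print(f"measured / full-rollout: {measured / full_rollout:.3f}")
```

Under these assumptions, control users also benefit from treated neighbors, so the measured difference between the groups understates what a full rollout would deliver (`lift * (1 + alpha)` in this toy model). The ratio printed at the end is one simple, hypothetical way to quantify that dampening; varying `p`, `k`, or `treated_fraction` gives a rough feel for how graph structure and experiment size change it.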